
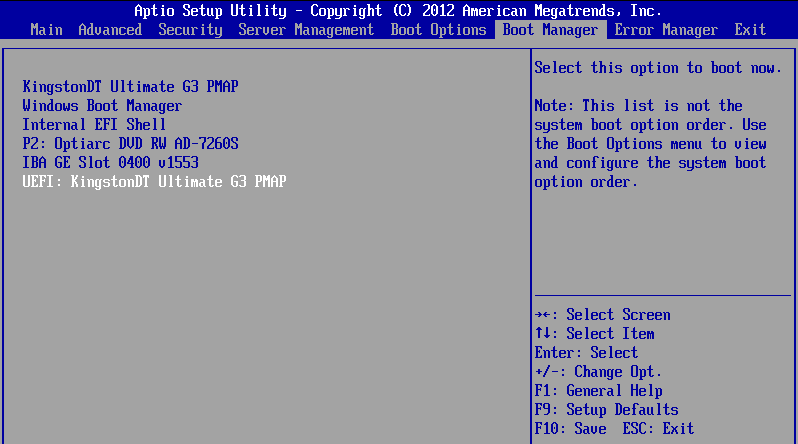

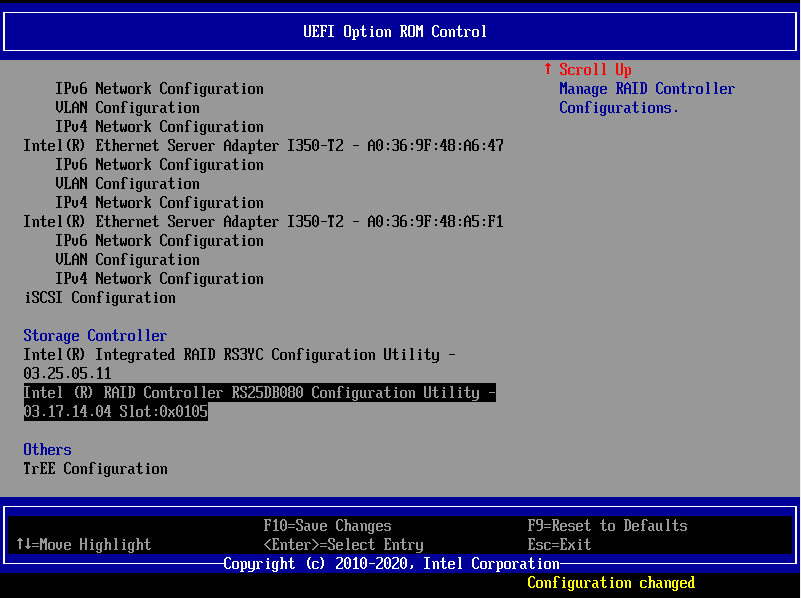

UEFI Server

The UEFI server firmware differs from legacy BIOS systems. With UEFI mode switched on, the option ROM setup programs for devices such as RAID cards are not accessible using the historic CTRL + R, CTRL + G and similar key combinations that were used previously. Instead, the RAID card configuration program is accessed through the main BIOS Setup.

How to Access RAID Card Settings

Applies to:

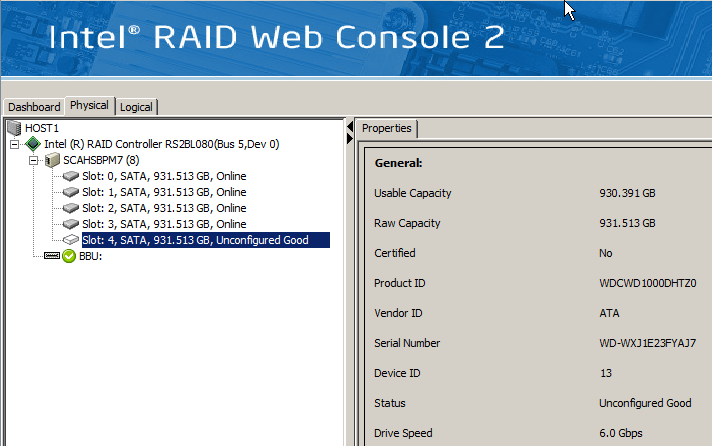

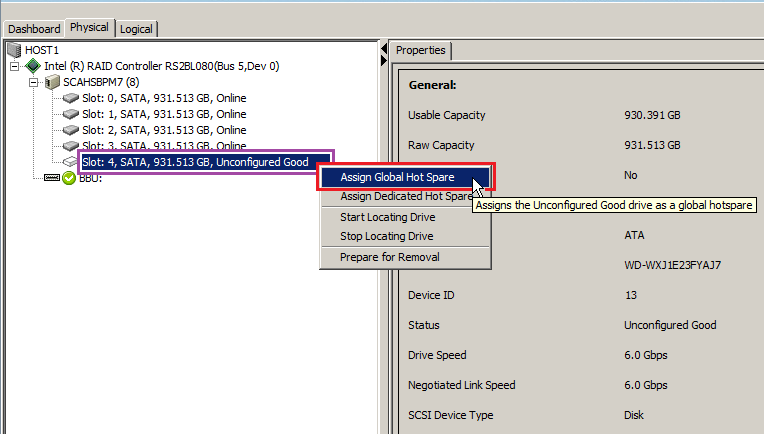

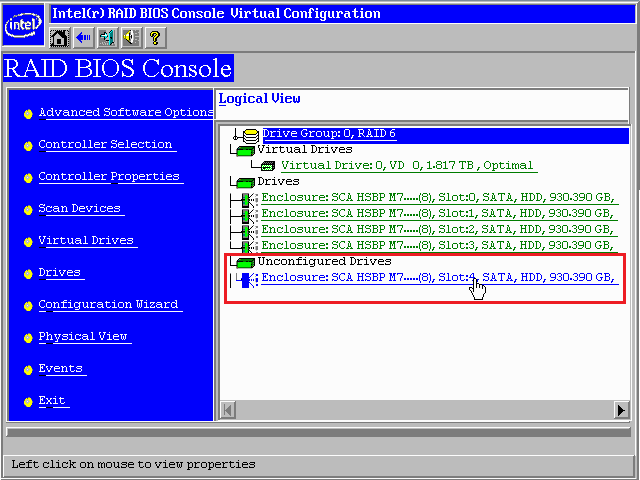

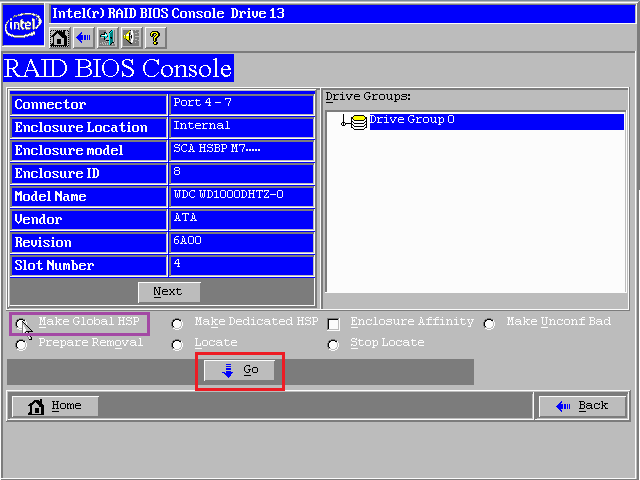

Using a Hot-Spare

A hot-spare helps ensure RAID system reliability and uptime. It gives the RAID controller a drive that can be used automatically to rebuild RAID data in the event of another drive problem or failure.

If you have a RAID5 system, consider migrating to RAID6 instead of simply assigning a hot-spare. This provides additional reliability as a second set of parity information is available. There are instances where this is not practical, for example if your system includes two RAID5 arrays, or perhaps a RAID5 and a RAID1, and the number of additional drives you can fit is limited. In this instance, if you can only fit one additional drive, the use of a Global hot-spare is recommended.

The instructions below are based on a system with an Intel or LSI hardware RAID controller or module, and the Intel RAID Web Console 2 (RWC 2) or LSI MegaRAID Storage Manager (MSM).

Tip: If you have recently replaced a failed hard drive and the RAID array has not automatically started the rebuild process, follow the instructions below. This is likely to happen on an Intel SRCSASRB or Intel SRCSATAWB controller as these do not automatically rebuild onto unassigned drives unless configured to do so.

Steps

Windows

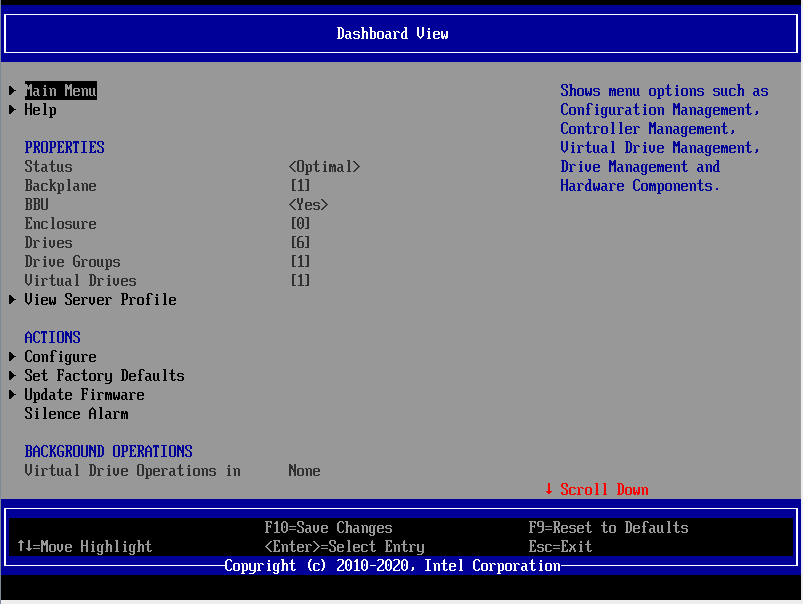

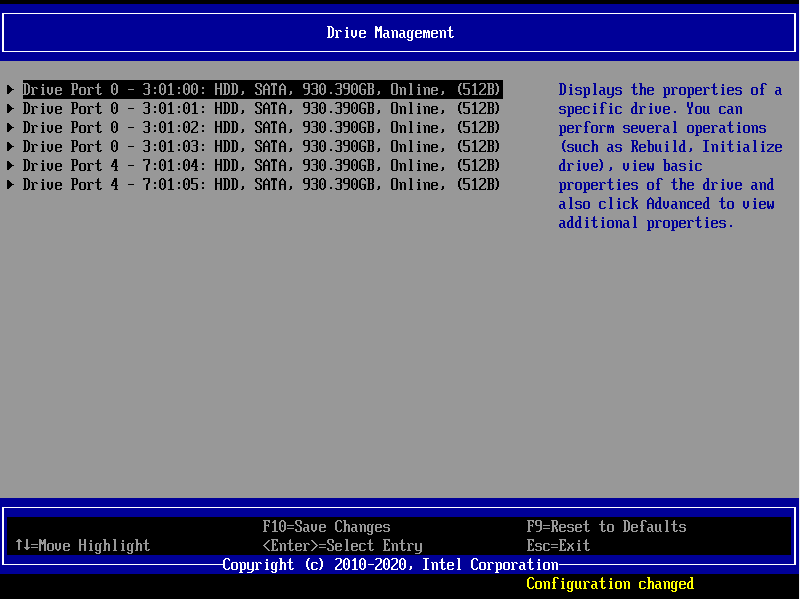

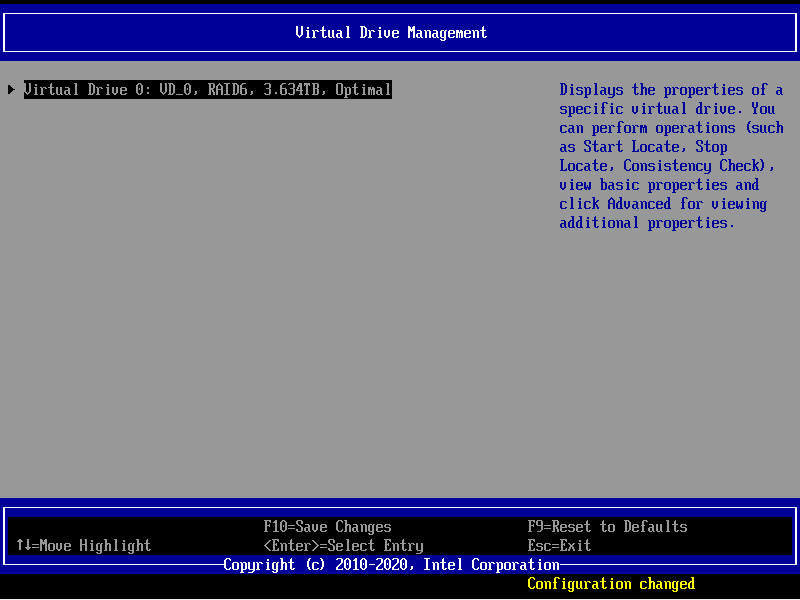

RAID BIOS

These instructions assume that you have already fitted the hot-spare drive and have rebooted the system to do so, hence performing the configuration using the RAID BIOS. You can also use this method if your system isn't running Windows. If you are running VMware, consider setting up your VMware hosts for Remote RAID Web Console configuration.

Steps:

Applies to:

Optimal Configuration

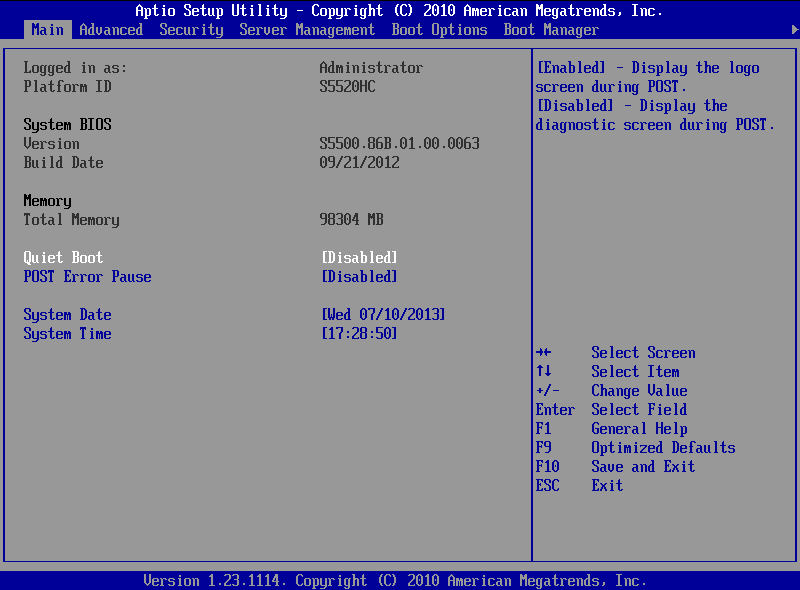

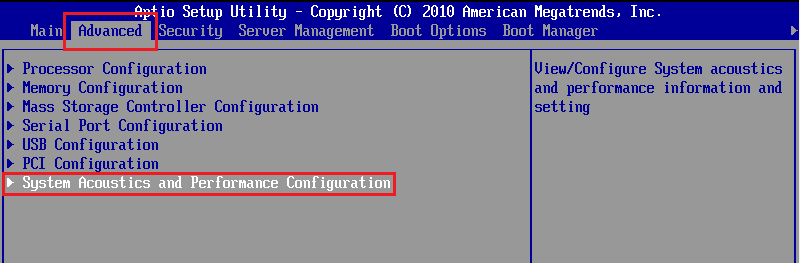

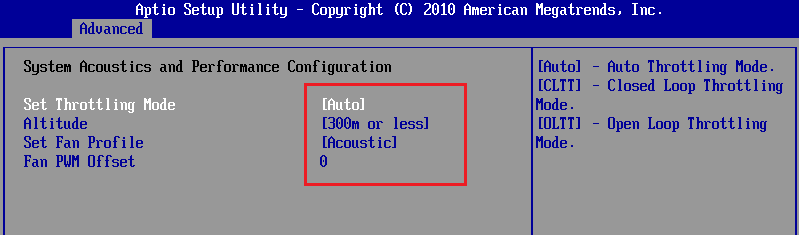

Use the steps below to configure these systems' BIOSes for the best acoustic performance.

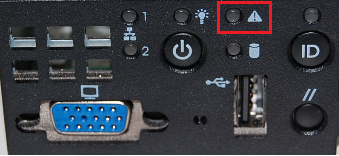

Troubleshooting

Other common causes of fan problems:

Applies to:

Article Purpose

The aim of this article is to assist users who need to install Windows Server. It covers some of the common issues that are faced and shows you how to deploy Windows or Windows Server even on the most difficult of systems.

Topics

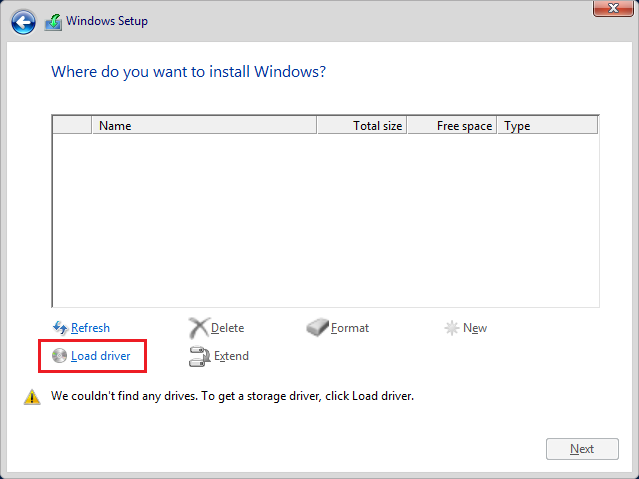

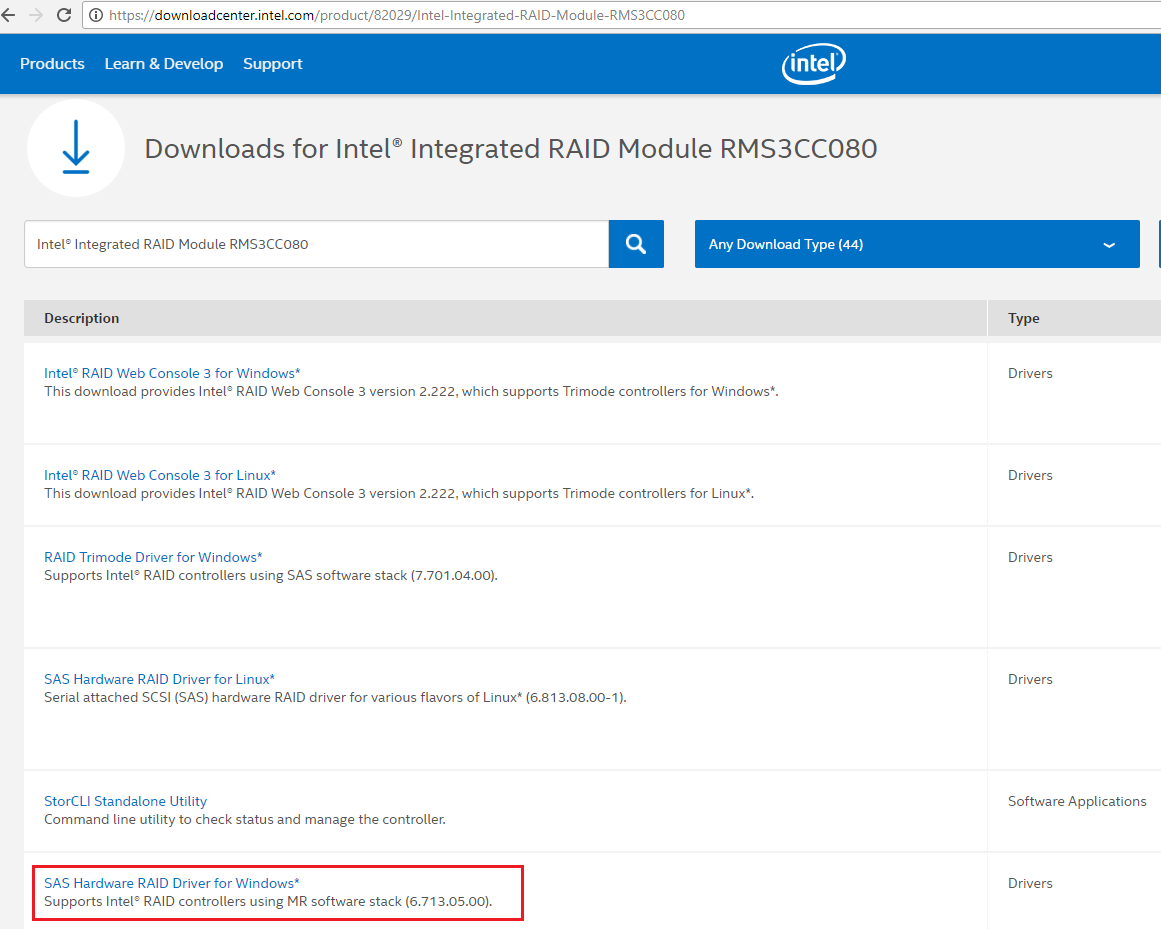

Process Flow For Installing Windows Server

Downloading Mass Storage Drivers

When installing Windows Server it is recommended to have the latest drivers for your RAID card, RAID module or integrated controller. If Windows does not find a hard disk to install to during setup, you will need to use the Load Driver option to supply the drivers. The drivers are available from the Stone Driver Finder (depending on model) or from the original component manufacturer's web site, such as Intel.

Note that your system may include a motherboard with several integrated controller (and driver) options. Some systems also include an add-in RAID controller or module, for which more recent drivers or software will be available on a different page to the motherboard. If you aren't sure what is included in your Stone system, or what you need, please contact Stone support for help.

Tip: If you download drivers in a ZIP file, make sure you extract the ZIP file to the pen drive, for use with Windows Setup. Windows Setup won't look inside ZIP files for drivers.

It's also worth knowing that some component manufacturers don't supply Windows Server specific drivers. Instead, they provide drivers for the equivalent desktop operating system that shares the same platform underneath. For example, Server 2016 might need to use Windows 10 x64 drivers, and Server 2012R2 might need to use Windows 8.1 x64 drivers, depending on how the component manufacturer packages them.
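The tip above about extracting ZIP files can also be scripted when you prepare several pen drives. The following is a minimal Python sketch, not an official Stone tool. The function name and the paths in the usage note are hypothetical, and it assumes the driver package contains `.inf` files, which is the usual form for drivers supplied through Load Driver.

```python
import zipfile
from pathlib import Path


def extract_driver_zip(zip_path, destination):
    """Extract a driver ZIP into a folder so the driver files sit loose on disk.

    Returns the .inf files found under the destination after extraction,
    as a quick check that the package really contains driver files.
    """
    destination = Path(destination)
    destination.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(destination)
    # Driver packages usually identify themselves with .inf files.
    return sorted(p for p in destination.rglob("*") if p.suffix.lower() == ".inf")
```

For example, `extract_driver_zip("raid_driver.zip", "E:/drivers/raid")` would unpack a hypothetical download onto a pen drive mounted as `E:`. If the returned list is empty, the ZIP probably did not contain a driver in the expected form.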

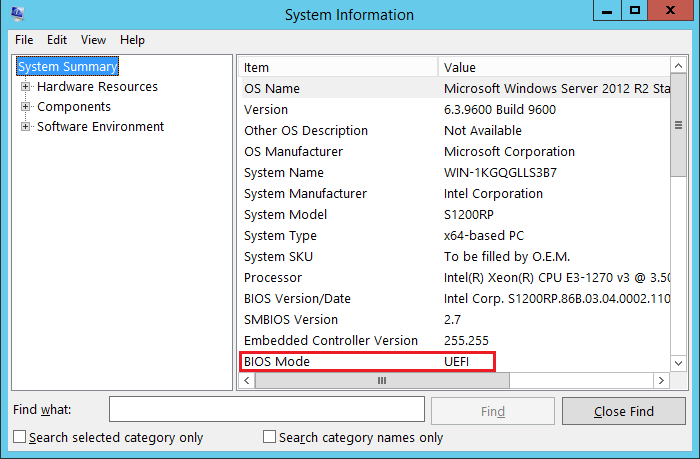

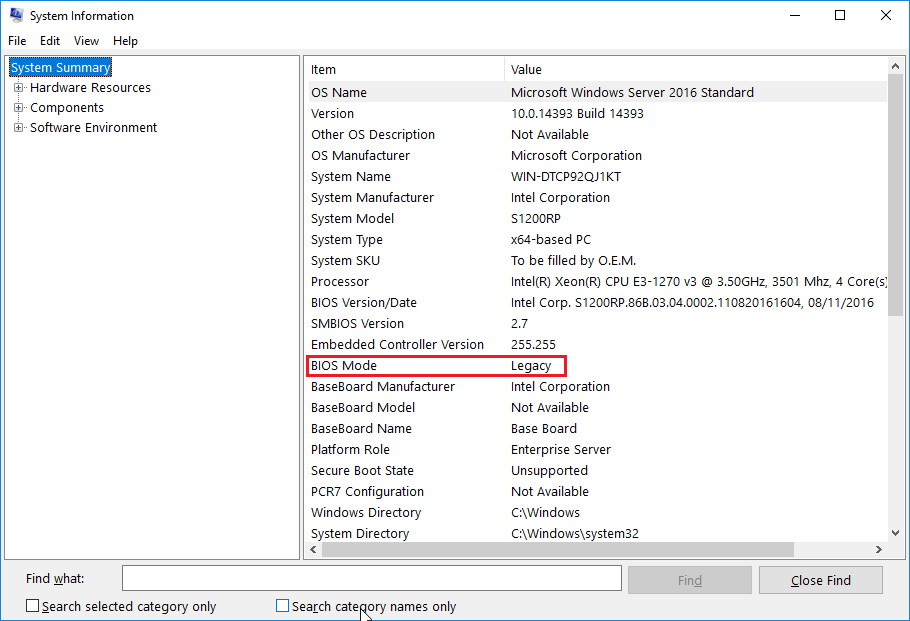

Plan the Right BIOS Boot Mode for your System i.e. EFI / Legacy

Legacy mode is (as of 2017) still the default BIOS mode for many new servers. However, there are more and more situations where UEFI mode (also known as plain EFI mode) is required:

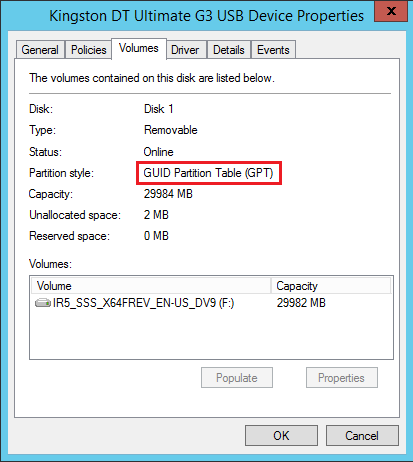

All three of these situations require UEFI mode.

It's worth pointing out that you can install Windows in Legacy mode on a volume greater than 2TB. However, you can only use the first 2TB of the disk. This means that, for example, on a 3TB disk, 1TB will be wasted. These limits are caused by the historical limits of Master Boot Record (MBR) partitioning, which has a 2TB limit. GUID Partition Table (GPT) partitioning does not have this limit, but GPT volumes can only be booted using UEFI BIOS.

You can install Windows onto a separate, smaller volume in Legacy BIOS mode, and then access the entire 3TB volume as a secondary volume. This is possible because Windows can still create a secondary volume using GPT, and access more than 2TB, but it just won't be able to boot from it.

The following are not reasons for UEFI booting your server:

Note: Read below for other BIOS settings, and on setting up your RAID volume, before making your final BIOS UEFI/Legacy mode selection.
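The 2TB MBR limit discussed above comes from MBR storing sector addresses in 32-bit fields. The short Python sketch below works through the arithmetic. It assumes traditional 512-byte logical sectors, and the function names are illustrative only.

```python
SECTOR_SIZE_BYTES = 512      # assumption: traditional 512-byte logical sectors
MBR_MAX_SECTORS = 2 ** 32    # MBR stores sector addresses in 32-bit fields


def mbr_usable_bytes(disk_bytes, sector_size=SECTOR_SIZE_BYTES):
    """Return how many bytes of a disk MBR partitioning can address."""
    return min(disk_bytes, MBR_MAX_SECTORS * sector_size)


def mbr_wasted_bytes(disk_bytes, sector_size=SECTOR_SIZE_BYTES):
    """Return how many bytes lie beyond the reach of MBR partitioning."""
    return disk_bytes - mbr_usable_bytes(disk_bytes, sector_size)


if __name__ == "__main__":
    three_tb = 3 * 10 ** 12  # a "3TB" disk in decimal units
    print(mbr_usable_bytes(three_tb))   # bytes addressable with MBR
    print(mbr_wasted_bytes(three_tb))   # bytes that cannot be used
```

With these assumptions the limit is 2,199,023,255,552 bytes, so roughly 0.8TB (decimal) of a 3TB disk is out of reach, which is the "about 1TB" wasted space mentioned above.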

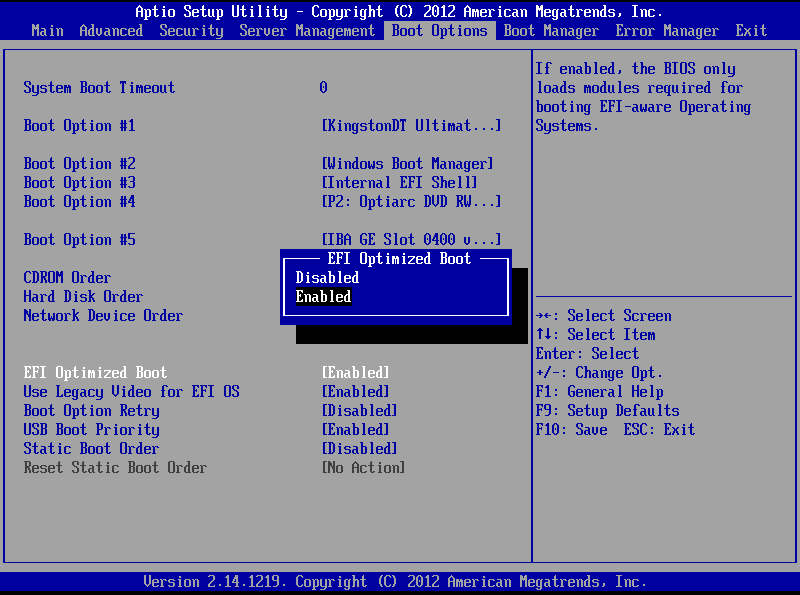

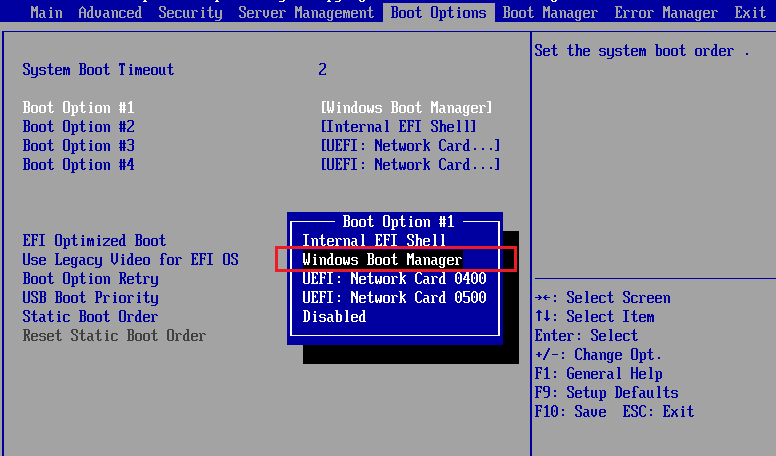

Intel E3 Platforms such as S1200V3RPL

Note: EFI optimised boot Enabled only supports booting EFI / UEFI devices. However, disabling EFI optimised boot allows the booting of both EFI and Legacy devices. If you want to be sure which mode your Server is booting in for the installation of Windows, prepare your USB installation media accordingly.

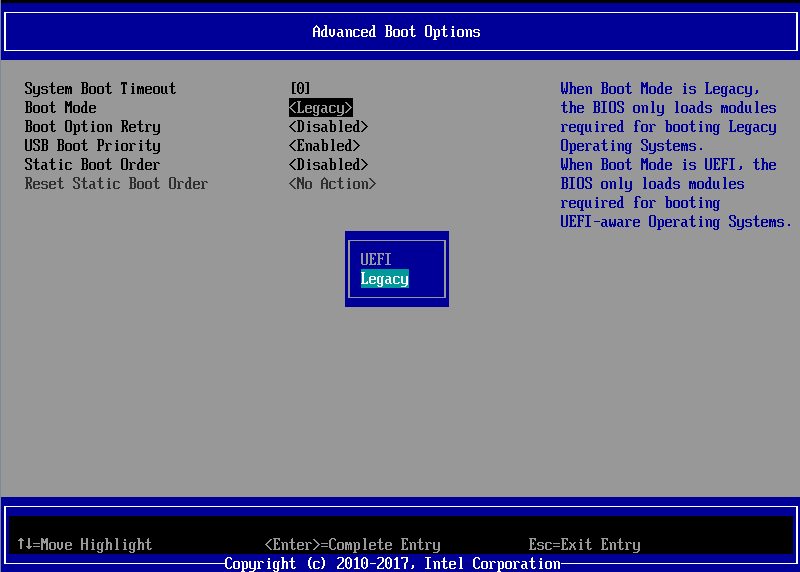

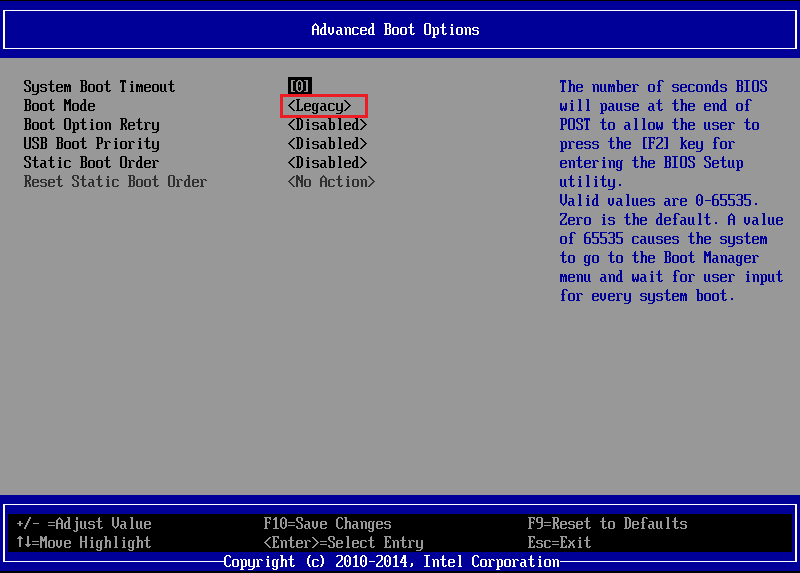

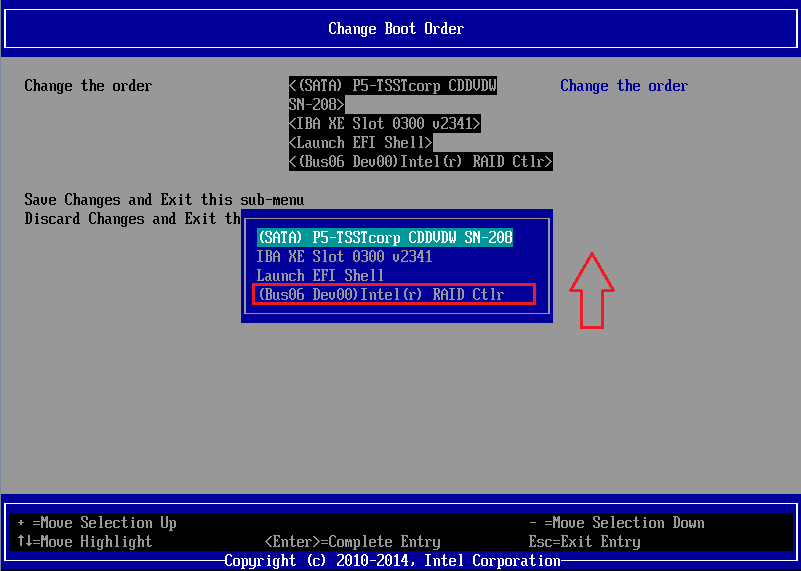

Intel E5 Platforms such as S2600WTTx

Setup > Boot Maintenance Manager > Advanced Boot Options > Boot Mode

Other Recommended BIOS Settings

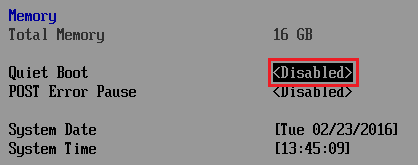

Changing the Quiet Boot BIOS Mode - Intel E3 Platforms such as S1200V3RPL

Changing the Quiet Boot BIOS Mode - Intel E5 Platforms such as S2600WTTx

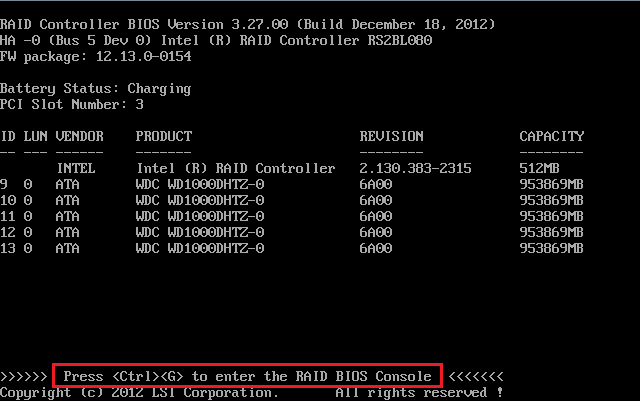

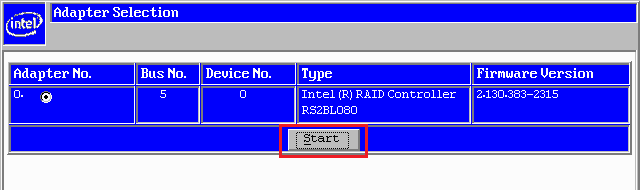

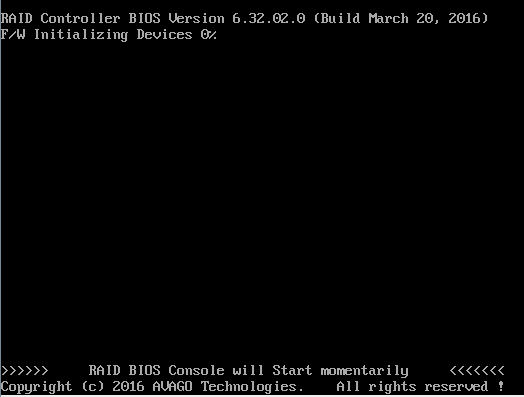

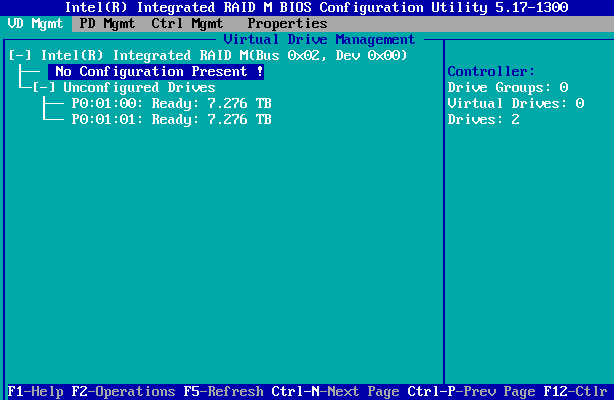

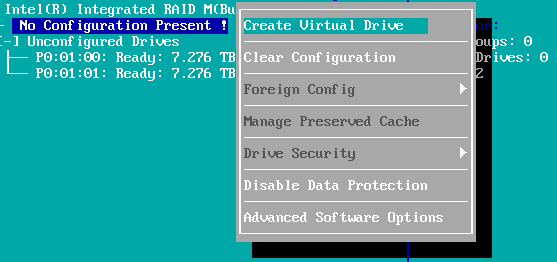

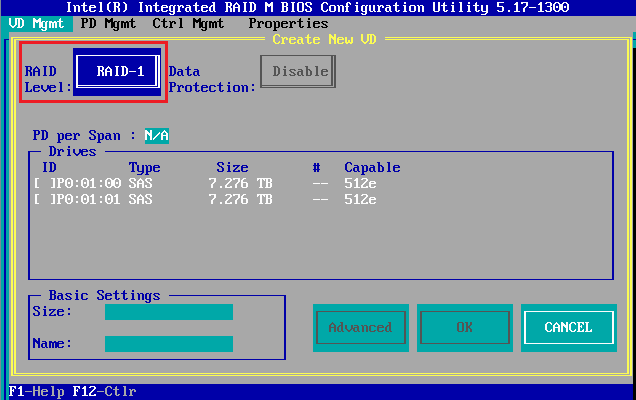

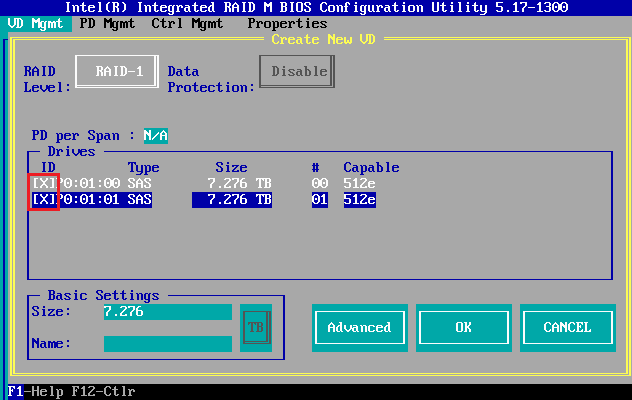

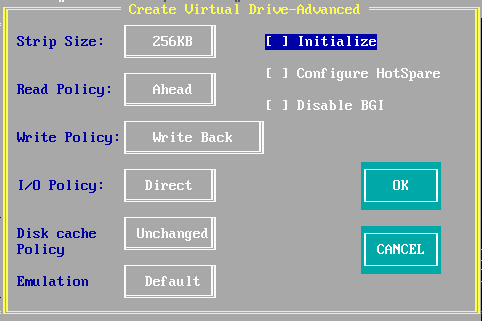

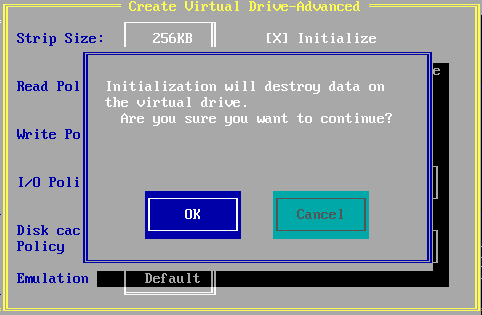

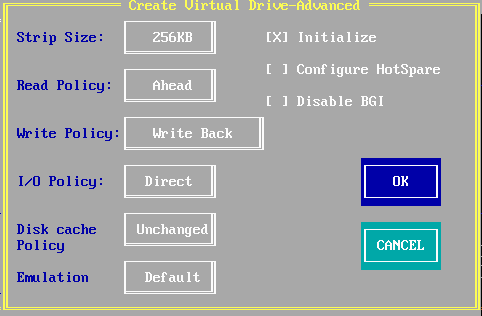

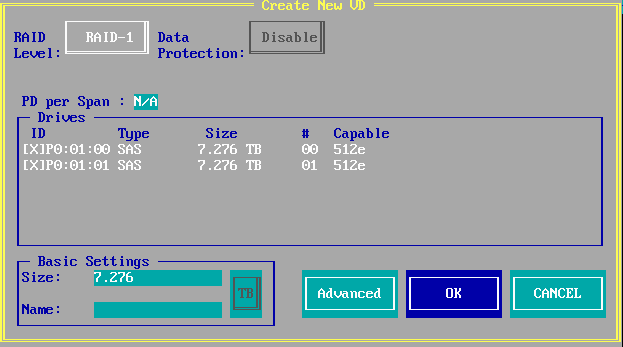

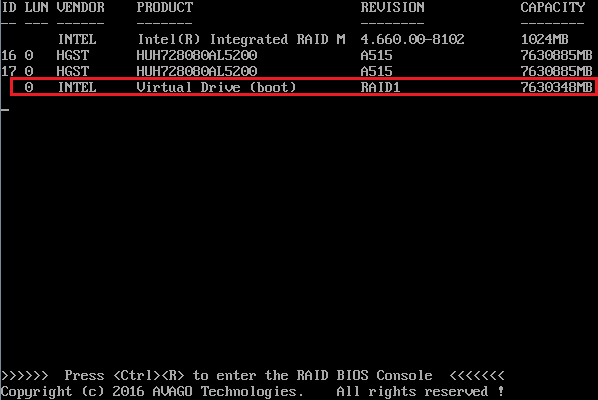

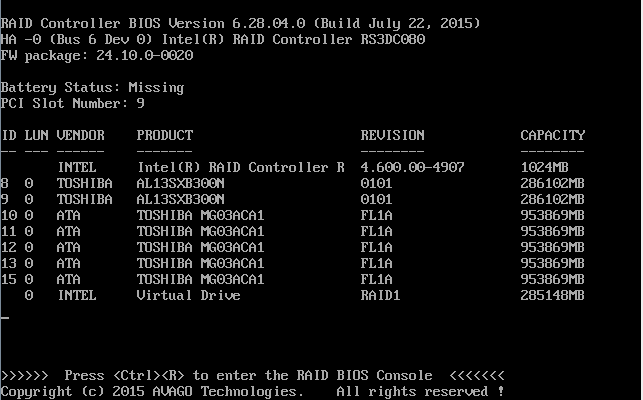

Upgrading the Firmware on your RAID Controller

This is normally done in the factory when your server or workstation is assembled. It may be a good idea to upgrade the firmware on your RAID controller if you are upgrading or reinstalling an older system, especially if you are installing a newer operating system, for example Server 2016 instead of Server 2012R2. In this situation, also consider installing the latest motherboard firmware update package. Firmware updates are usually easiest done through the EFI Shell. Contact Stone support for further help.

Setup your Boot Volume RAID Array

Again, this is normally done in the factory. However, example steps for creating a RAID 1 array with two drives for the 12Gbit Series controllers from Intel/Broadcom/LSI are shown below.

Note: You might need to turn off UEFI BIOS mode to get access to the RAID BIOS Console, on some systems. If you don't see the option for the RAID BIOS console during POST, turn off EFI Optimised boot / BIOS UEFI mode, complete the work in the RAID BIOS console, and then put the BIOS back to the previous setting.

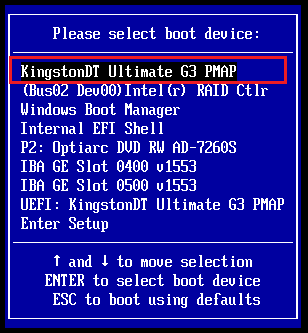

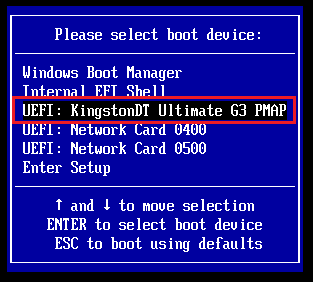

Prepare your Installation Media and Boot From It

Ideally, prepare your installation media for direct compatibility with how you intend to use the server.

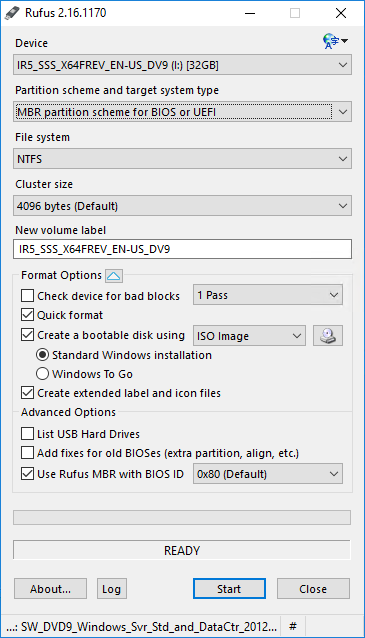

Some tools, like ISO2USB, produce media that is usually bootable in both UEFI and Legacy mode. This isn't helpful, as you can't be sure which mode the system has booted in. This is especially important on some server platforms, such as the S1200V3RPL, which support EFI Optimised Mode Enabled (EFI only) and EFI Optimised Mode Disabled (EFI and Legacy support).

Preparing Installation Media for Legacy BIOS

Tip: Instead of using the F6 boot menu, you can go into the BIOS Setup using F2, and use the Boot Manager menu to select the boot device.

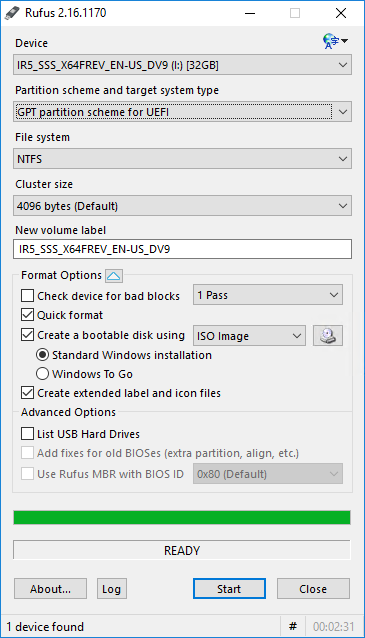

Preparing Installation Media for UEFI BIOS

Tip: ISO2USB is also not suitable for use with quite a lot of modern installation media, such as the latest distributions of Server 2012R2, Windows Server 2016 and Windows 10, because of the size of the files in the sources directory. These files can now be more than 4GB in size, which is greater than the maximum file size permitted by FAT32. ISO2USB only supports FAT32. To get around this issue, use Rufus, as this supports NTFS.
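To check in advance whether a set of installation files will hit the FAT32 limit described in the tip above, you can scan the extracted or mounted media for oversized files. This is a minimal Python sketch under stated assumptions: the function name and the example path are hypothetical, and the limit used is the FAT32 maximum file size of one byte under 4GiB.

```python
from pathlib import Path

FAT32_MAX_FILE_BYTES = 2 ** 32 - 1  # FAT32 cannot hold a file of 4GiB or larger


def files_too_large_for_fat32(media_root, limit=FAT32_MAX_FILE_BYTES):
    """Return the files under media_root that are larger than the limit."""
    root = Path(media_root)
    return sorted(
        p for p in root.rglob("*")
        if p.is_file() and p.stat().st_size > limit
    )
```

For example, running `files_too_large_for_fat32("D:/")` against mounted installation media (the drive letter is an assumption) returns an empty list if the media fits on FAT32. Any file it lists, typically in the sources directory, means the media needs a tool that can format the pen drive as NTFS.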

Complete the Installation of Windows

What If Something Goes Wrong

Below are some common problems and their solutions. If you are experiencing problems installing Windows on your Stone server or workstation, please do not hesitate to contact Stone support for further help.

I can't Boot the System from my Installation Media

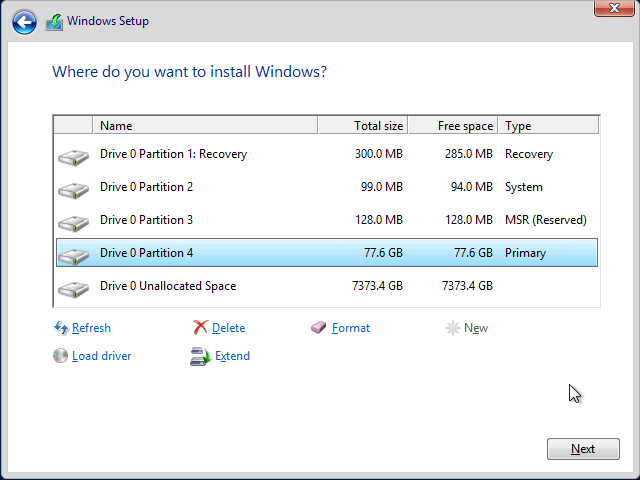

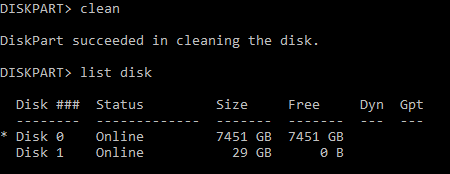

I re-created the RAID Array but the Existing Partition Layout or Information is Still There

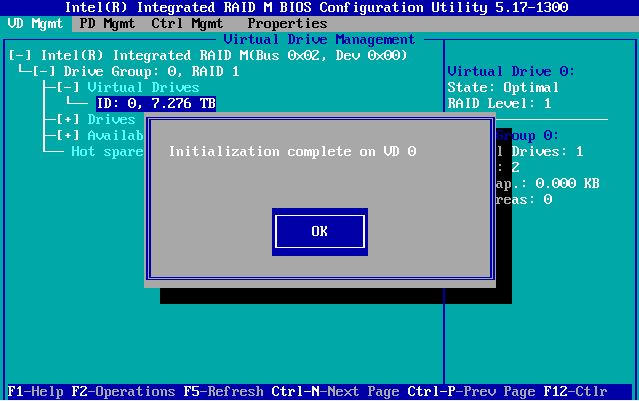

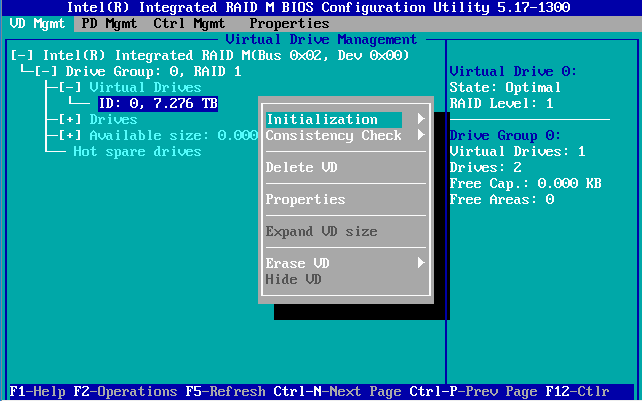

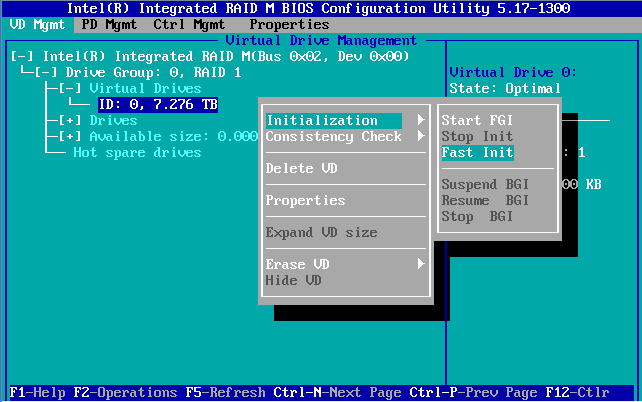

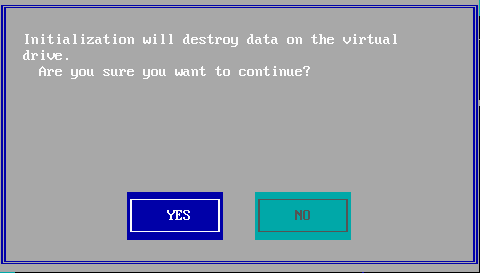

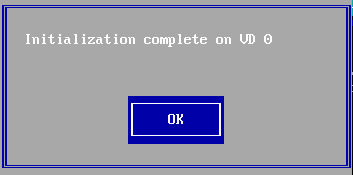

To Perform a RAID Array Fast Initialisation

Note: This procedure will delete everything on the virtual disk. If there is data you need to keep, ensure this is backed up and checked on a completely different virtual disk or controller.

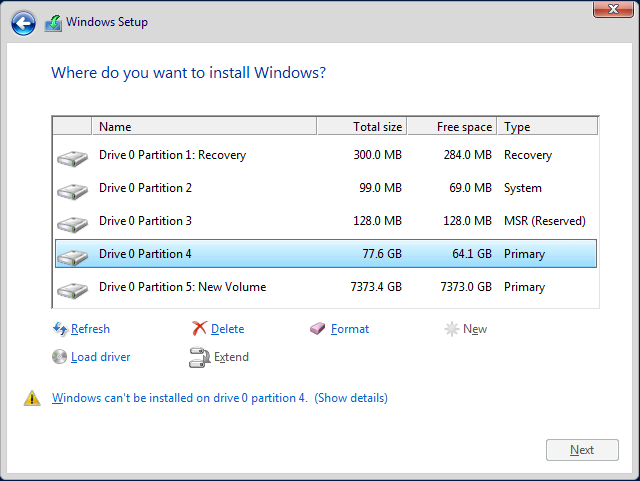

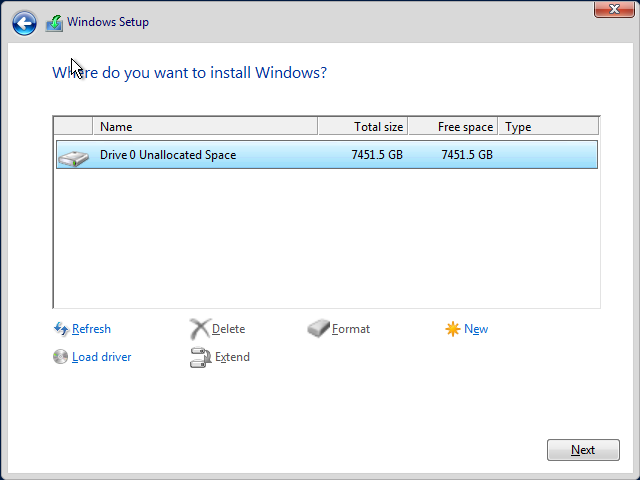

Windows says It Can't be Installed onto the Partition

Drive 0 is split into More Than One Section of Unallocated Space

Note: All of these solutions require reinstalling Windows and are likely to cause the loss of any data on your boot volume/disk. Back up any data first.

I installed Windows but the RAID Controller is Not Listed As A Bootable Device

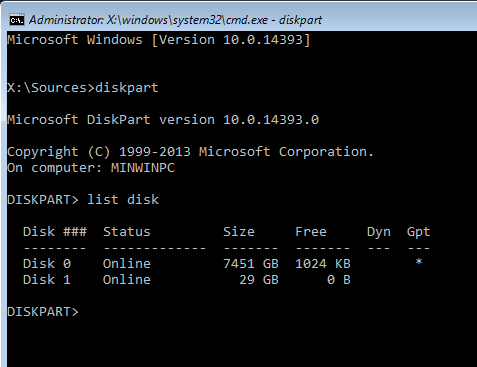

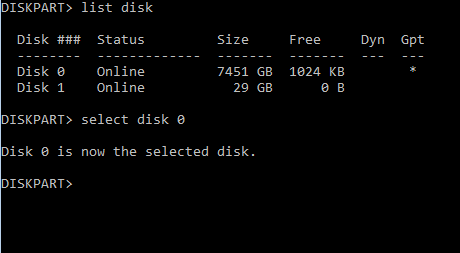

Using Diskpart to Clear the Disk

Note: This procedure will delete everything on the virtual disk. If there is data you need to keep, ensure this is backed up and checked on a completely different virtual disk or controller.

Note: This example shows the removal of GPT partitioning to allow a legacy mode installation to continue. However, as the disk is 8TB in size, in the real world you would want to proceed with a UEFI / GPT installation by making sure your installation media was set up properly and by booting the installation media in the right mode.

Note: When in UEFI mode, the BIOS might not list the RAID Controller as a bootable device. Instead, it detects that a Windows Boot Manager partition exists on the virtual disk, and shows Windows Boot Manager in the BIOS boot order.

I enabled EFI Optimised Boot / UEFI boot, and can no longer get into the main BIOS Setup using F2.

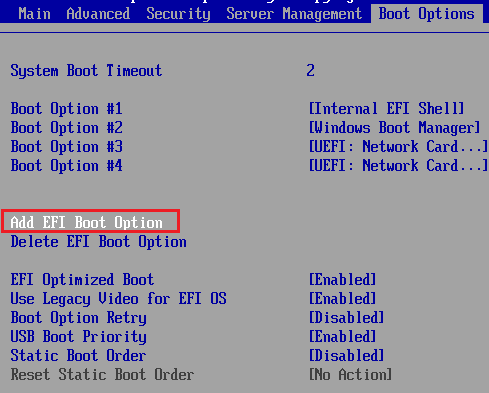

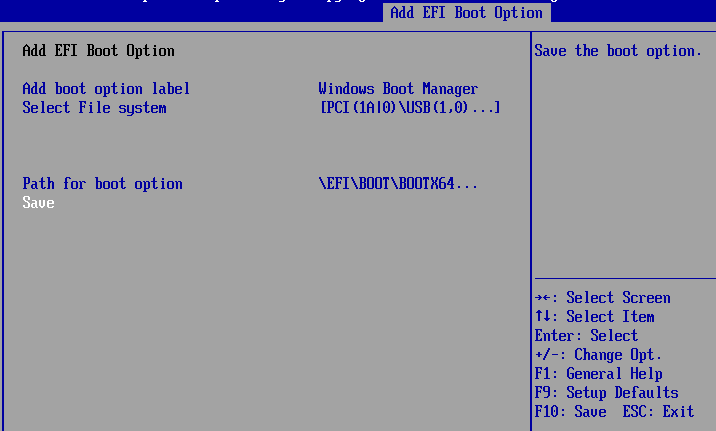

I Deleted the device EFI Boot Option from the BIOS, How Can I add this Back In?

This allows you to specify a bootable EFI device, useful if you have changed your motherboard or otherwise lost your boot entries.

Tip: If you don't have the option in your BIOS for "Add EFI Boot Option", then either your motherboard is in Legacy mode, or all available EFI bootable devices are already added as boot options.

Applies to:

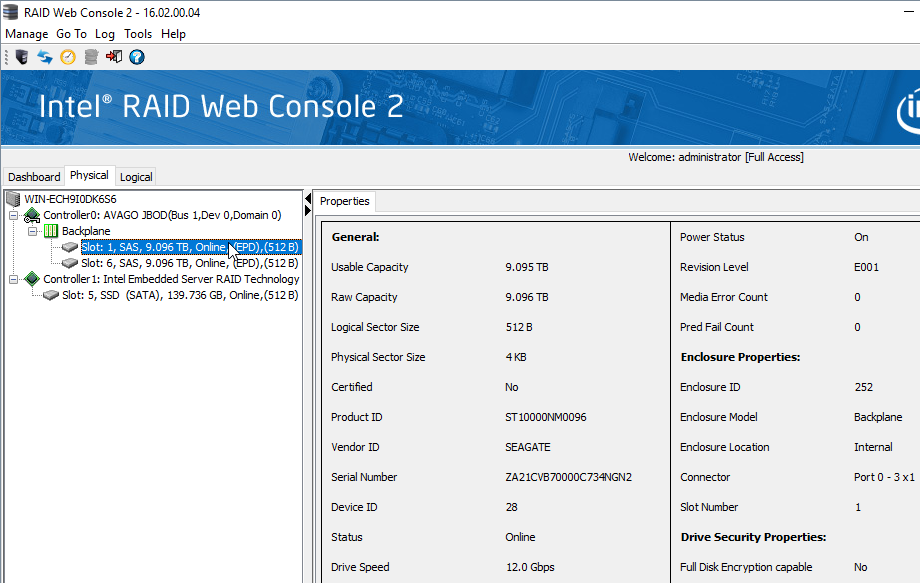

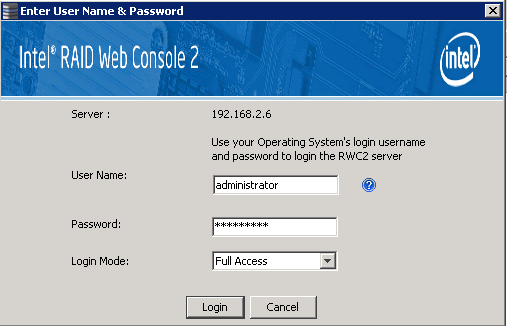

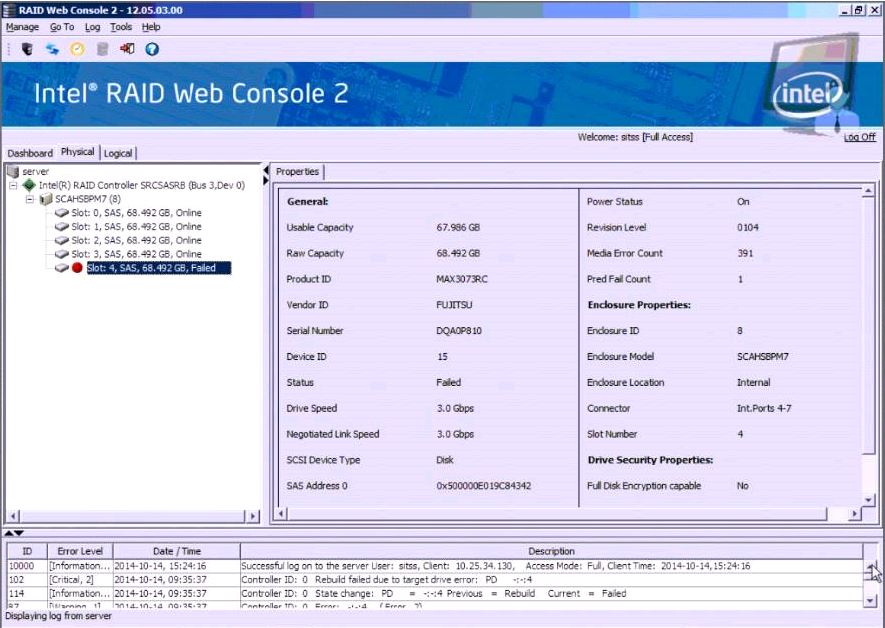

Managing Hard Drive Failures in Servers

When a hard drive fails in a server, it is normally recommended that you obtain a warranty replacement hard drive and do not re-use the old hard drive in the RAID array. When a hard drive has been marked as failed, it is normally due to a defect such as a large number of bad blocks or another malfunction. While the hard drive may come back online, it should not be relied upon.

The only exception to the rule of not re-using a hard drive is where the drive failure has left the RAID array in a failed state. This should only happen when you are using a RAID 0 array (which is not recommended) or if the system has already suffered a drive failure. In this scenario, when the array has failed, it is recommended to attempt to bring the last failed drive back online. If you succeed in bringing the RAID online, please take a full backup as soon as possible but ensure that you do not overwrite your previous full backup. A bare metal or system state backup should be used. When this has been completed, the drive should then be replaced and the RAID array rebuilt, and then the system restored.

In the event of any hard drive or RAID system failure please contact Stone Support for warranty service. If your system is outside of warranty, we may be able to offer an out of warranty chargeable repair.

Example of a Faulty Drive

The RAID Web Console example below shows a drive with media errors (bad blocks) as well as a SMART predictive failure count. This drive should be replaced. Note that some low end software RAID controllers (such as the Intel ESRT-2 controller) don't preserve media error counts or predictive failure counts between reboots.

General Recommendations:

Always use RAID management software, such as the Intel RAID Web Console, to manage your RAID arrays where possible. You can then use features such as email alerting to give you prompt notice of any issues, and also to control the available hot-spare(s) and the rebuild process.

Applies to:

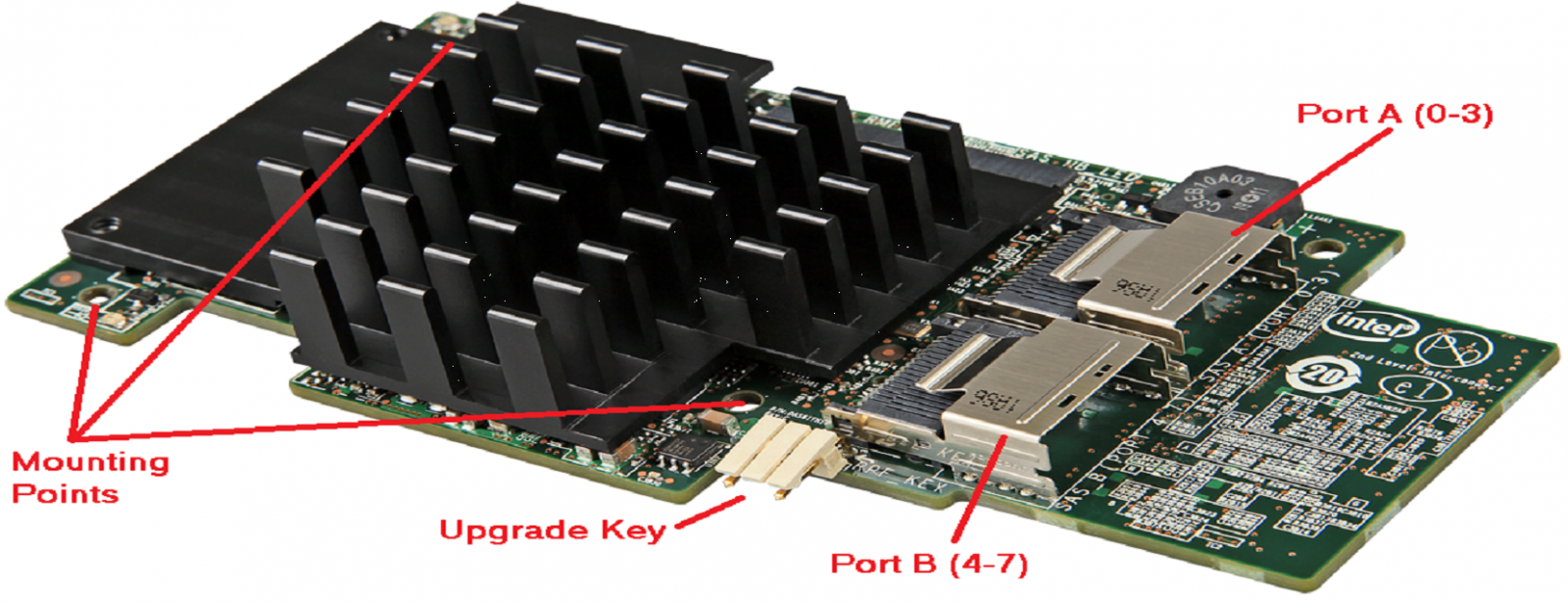

Hardware RAID Controller

The Intel RS3DC080 is a hardware RAID controller with an Avago / LSI chipset. This is a PCI Express based device and is compatible with a number of Intel server motherboards. This module is based on the first generation of Avago 12Gbit (12G) SAS controllers and is the successor to the RS25 range of products. It is backwards compatible with 6Gbit SAS and 6Gbit SATA devices.

The Card

The module has a warning buzzer as per previous products and two Mini-SAS HD SFF-8643 connectors for drives. Drive LED management is normally performed through the sideband connection in the data cable.

Key Features:

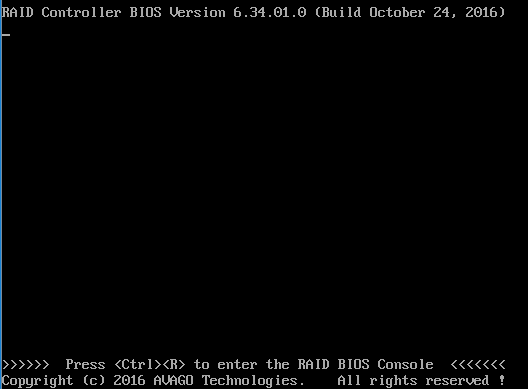

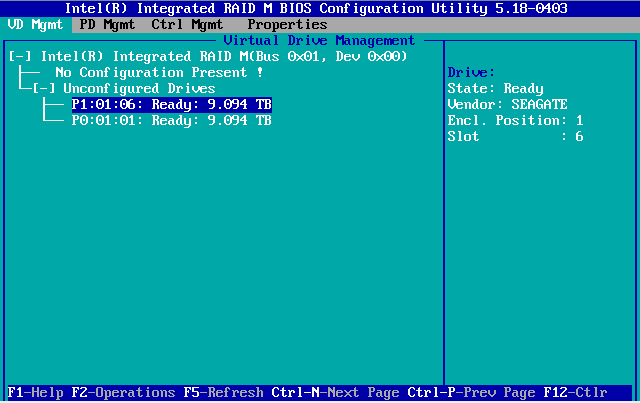

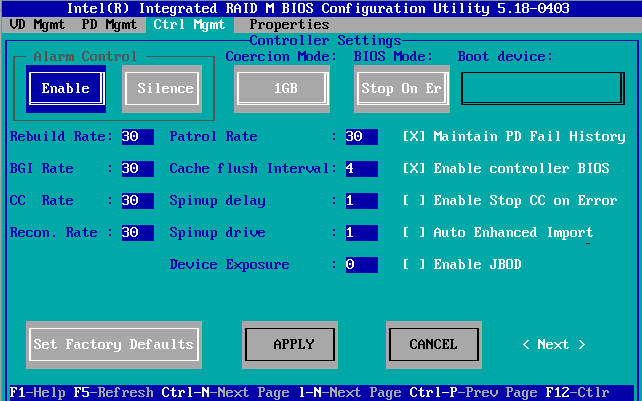

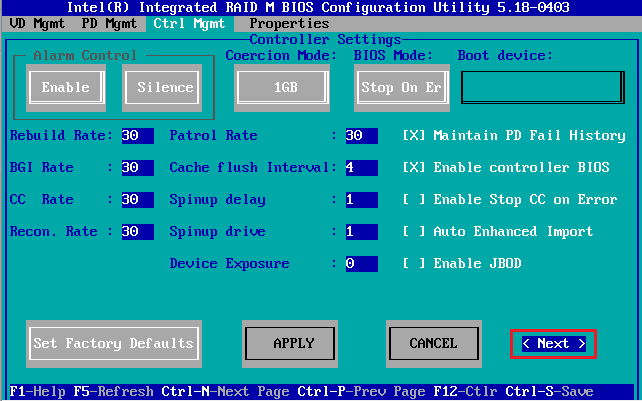

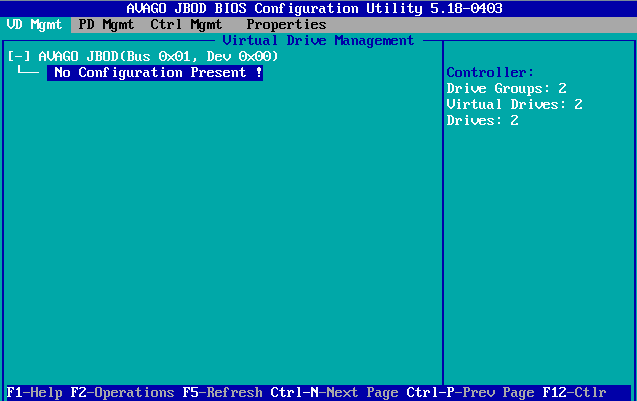

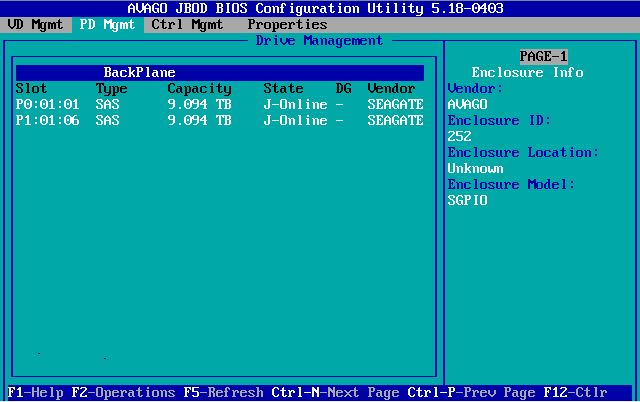

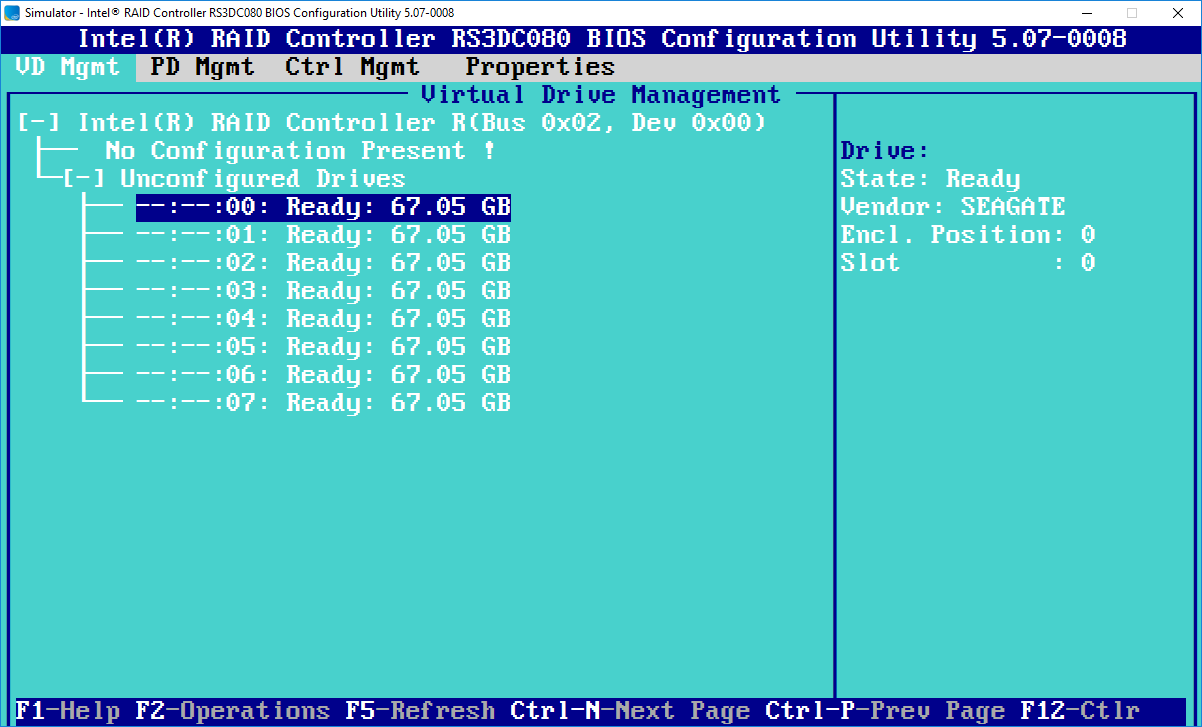

Driver Support

This module is supported by the same LSI Hardware Family Driver as per the RS2BL080 and RMS25CB080, upgraded to support the new chipsets. Operating system migration between controllers is only possible if the new model controller has been present when Windows is booted, or if the Windows inbox driver supports the card. Only the Windows Server 2012 R2 or newer Inbox LSI Driver supports the RS3DC080 in emergency situations. RAID Web Console 2 version 15 or later should be used to perform management of this controller from within Windows.

New Connector Standard

The mini-SAS HD connector SFF-8643 supports 12Gbit/second transfers. 12Gbit/second transfers also require compatible devices and backplanes. The use of older devices or backplanes will result in a lower connection speed. Cables are available to connect SFF-8643 cards or modules to older SFF-8086 6Gbit/second backplanes.

Revised RAID BIOS

To get into the new RAID BIOS, use CTRL+R during system POST, unlike previous generations which used CTRL+G. The new 12G generation of LSI / Avago cards comes with a revised RAID BIOS which reverts from a GUI to a more text-based look. Most operations that were possible in the previous generation are still possible. Note the instructions at the bottom of the screen on how to move between tabs (CTRL+N / CTRL+P) and how to open the properties of an element, for example by pressing F2 for Operations.

Useful Links

Applies to:

Hardware RAID Module

The Intel RMS25CB080 is a hardware RAID controller. Unlike PCI Express slot based controllers, this controller is described as a "module" and connects to a proprietary interface on compatible Intel server motherboards. This module is a second generation SAS/SATA 6Gbit controller and is the successor to the RS2BL080 range of products. The RS2BL080 was a PCI Express card based solution and the new module is designed to provide next generation performance at a lower price.

The Module

The module has a warning buzzer as per previous products and two SFF-8087 connectors for drives. There is no IPMB/I2C connector to connect to an enclosure or backplane; rather, drive LED management is done through sideband capable cables such as HPBLEA-179.

Key Features:

RAID Module Connector

Compatible motherboards include the Intel S1200V3RPL, which has the module connector roughly in between the memory slots and the PCH. The approximate module location is shown below.

Driver Support

This module is supported by the same LSI Hardware Family Driver as per the RS2BL080, upgraded to support the new chipsets. Operating system migration between controllers is only possible if the new model controller has been present when Windows is booted, or if the Windows inbox driver supports the card. Only the Windows Server 2012 or newer Inbox LSI Driver supports the RMS25CB080 in emergency situations. RAID Web Console 2 version 13 or later should be used to perform management of this controller from within Windows.

Useful Links

Applies to:

What is the difference between Enterprise and Consumer Desktop hard drives?

Enterprise and Consumer (or ordinary desktop) hard drives are different in several key ways. We do not recommend the use of desktop hard drives in servers or mission critical systems at any time.

Desktop, or Consumer Hard Drives

These drives are suited to desktop workloads. They are not designed for heavy use, or 24x7 access. Cost optimised desktop drives are suited to single usage (i.e. there is one user using that machine, as opposed to servers where multiple access can be requested at the same time) and lower power draw. Desktop drives do not feature RAID protection features such as TLER (time limited error recovery).

Enterprise Hard Drives

Enterprise SATA and all SAS drives are designed for heavier usage and are built or tested with higher reliability in mind. Manufacturers of these drives usually quote higher reliability in the form of Mean Time Between Failures (MTBF) and certify them for 24x7 access.

Enterprise drives feature TLER. In the event of a media or other problem, the drive will only attempt to resolve the problem internally for at most 6 seconds. At the end of that time, the drive will hand over error management to the RAID controller. The drive will not go offline to complete "heroic recovery". This prevents the situation where a desktop drive could retry almost indefinitely to read the data. This would keep the RAID controller in a timeout state where it could not easily determine the state of the drive. This would introduce performance problems, and could eventually force the entire drive out of the RAID array even if just one sector is damaged.

Enterprise drives will attempt to stay online while the RAID controller either remaps the sector, or notifies the user of a need to replace the drive at the nearest opportunity. Data remains protected, especially if you are using RAID 6.

Note: Seagate Constellation and Seagate NS drives are examples of Seagate Enterprise drives. Western Digital RE and RE4 drives are examples of Western Digital's equivalent. WD Caviar Black drives feature a more reliable mechanism but are still designed for desktop use and do not feature TLER.

Applies to:

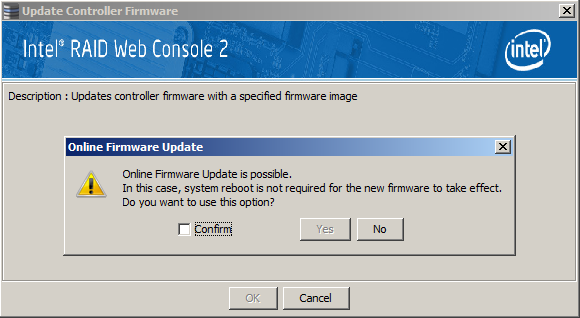

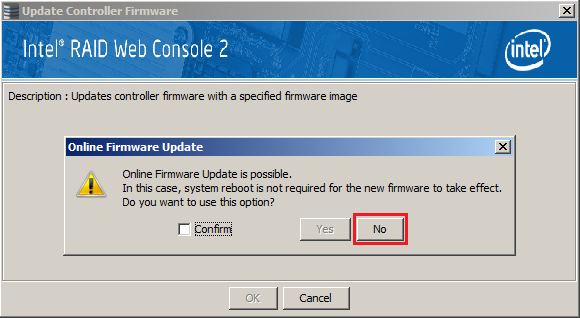

Updating RAID Controller or Module Firmware through RAID Web Console

If you use the RAID Web Console / MegaRAID Storage Manager software to perform a RAID controller update, you may be given the option of an online firmware update. This is a new feature that has been made available in RAID Web Console 2 Version 15 and newer.

What Does This Feature Do?

Normally when you flash the firmware through RAID Web Console, the firmware is uploaded to the controller, and then saved into the flash memory. However, the firmware upgrade does not actually take effect until the system is restarted. The online firmware update attempts to restart the controller with the updated firmware without needing to reboot the system.

Is this Recommended?

In most situations, no. This should definitely not be attempted if your operating system is running from the RAID volumes hosted by the controller, or if you have any mission critical system functions hosted by that controller, including virtual machines, backup volumes or file shares. There will be a brief loss of controller access, and Windows blue-screen crashes have been observed when using the online firmware update feature.

General Guidelines for Updating a System

Applies to:

RAID Capacitors and Smart Cache Batteries

These batteries are used to protect the contents of a hardware RAID card's write cache from being lost in the event of a power outage.

Some RAID capacitors or cache batteries can be particularly expensive. In addition, many cache batteries have a finite life and you should expect to replace these every 3-5 years or so.

More Information

Hardware RAID cards can be configured to use their buffer in one of two ways:

In read and write cache mode, the contents of the write cache will be lost, i.e. not committed to disk, in the event of an unexpected power outage. The optional write cache battery or capacitor can protect the contents of this write cache for a limited period of time, usually 48 hours or less. When power is restored, the RAID controller will commit the contents of the cache to disk. However, your operating system will still have suffered from an unclean shutdown and there is no guarantee that your file system or application state will be any better than if a RAID cache battery was not fitted.

The above diagram shows that the RAID cache battery only protects a portion of the data flow from application to disk.

Recommendations

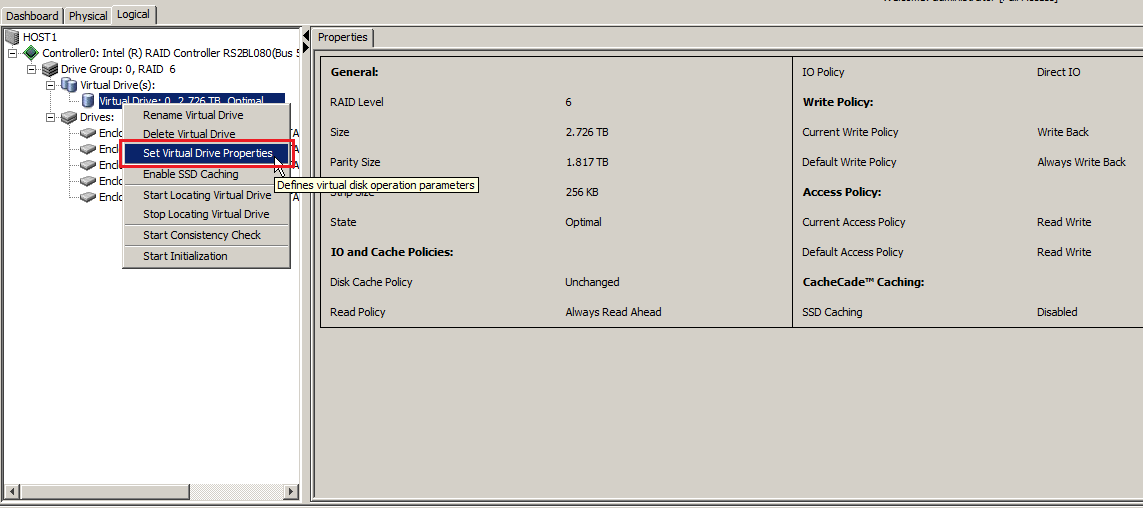

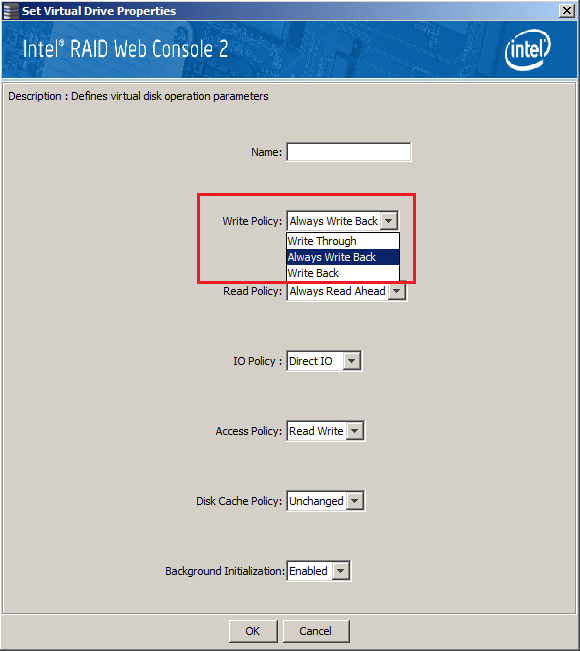

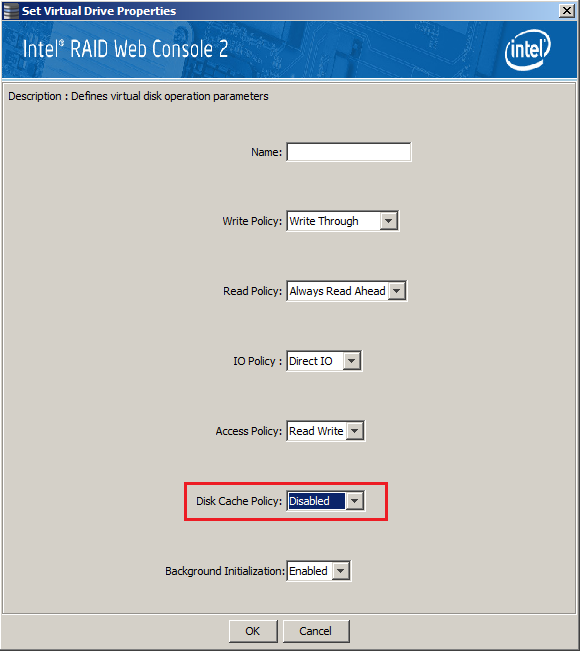

How to Turn off Write Caching at the Virtual Drive Level

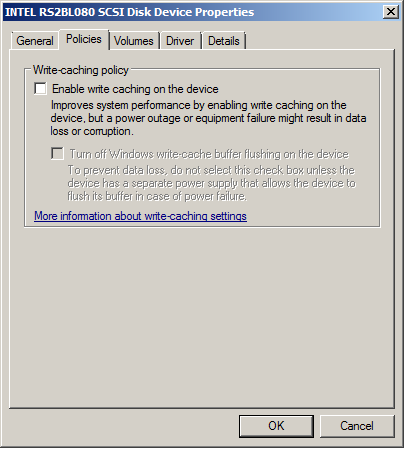

Further Considerations

Disabling Write Cache at the Operating System Level

Many applications or systems attempt to turn off disk caching to provide security in the event of a power loss. For example, a Windows Domain Controller will turn off write caching where it can. However, when a hardware RAID card is fitted, this does not guarantee that the hardware write cache, either on the controller or the end disks, will actually be disabled. Do not rely on this feature to provide data integrity in the event of an unplanned power loss.

Windows Updates

We recommend that you turn off Automatic Windows Updates, both on Host Virtualisation servers and on the Guest servers. Systems should be patched regularly as part of your system maintenance plan, rather than automatically. This ensures that the overall shutdown time when the UPS battery is low is relatively consistent, ensuring a clean shut-down.

Applies to:

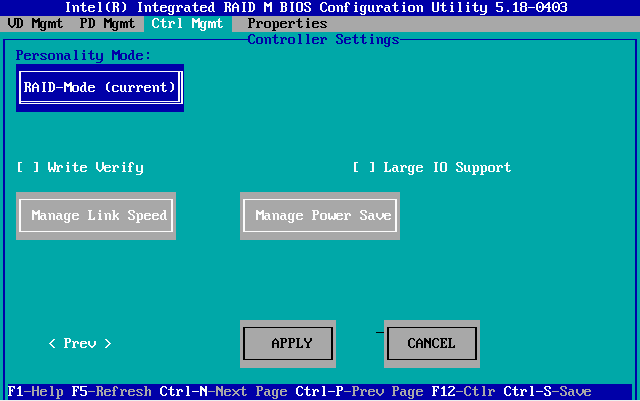

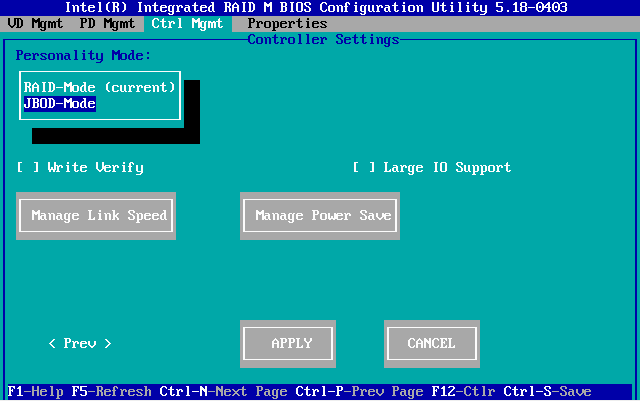

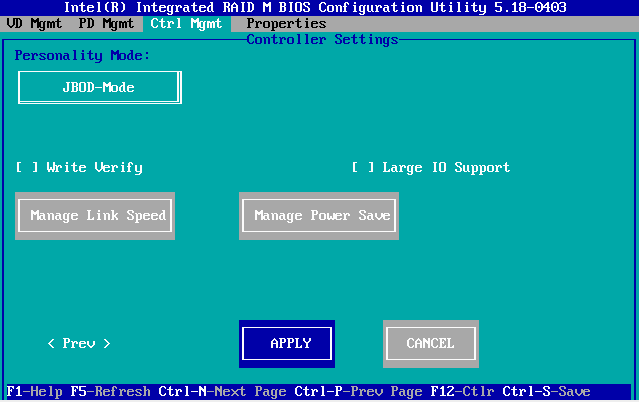

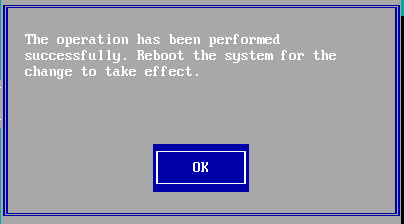

What Is JBOD Mode?

JBOD stands for "just a bunch of drives". JBOD mode passes through physical disks so that the operating system or host can see each individual drive. This is the opposite of a normal RAID controller, which groups physical disks together to form a single, often larger or fault-tolerant, virtual drive. JBOD mode is useful in some software storage solutions, such as Storage Spaces Direct, which require direct access to individual disk drives, rather than a RAID array.

Do All SAS Adapters Support JBOD Mode?

Not all adapters (HBAs) do, but many support it to a varying degree.

How Do I Enable JBOD Mode on Intel 12G SAS Adapters?

This involves changing the personality of a 12Gbit RAID adapter to turn off all RAID functionality and only enable JBOD mode. The example below is from an Intel RMS3AC160 adapter but the instructions apply to most other RAID modules and RAID PCIe cards in the same series.

Note: Enabling JBOD personality mode will destroy any RAID volume on the disks. You should be able to move JBOD disks between JBOD personality adapters without data loss, but always make sure the HBA is in the right personality mode first, and ideally all adapters sharing disks should be at the same firmware level. In a new deployment, always deploy the latest HBA firmware before connecting the disks.

Steps:

More Notes

Note: If you are using an Intel server platform that uses a motherboard such as the S2600WTTY or S2600WTTYR and cannot see or access the RAID BIOS options, first of all ensure that Quiet boot is turned off in the main BIOS. If this does not help, ensure the BIOS is set for Legacy boot instead of UEFI boot (Setup Menu > Boot Maintenance Manager > Advanced Boot Options > Boot Mode).

Applies to:

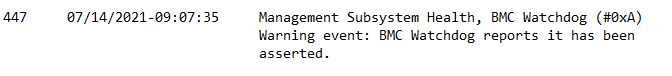

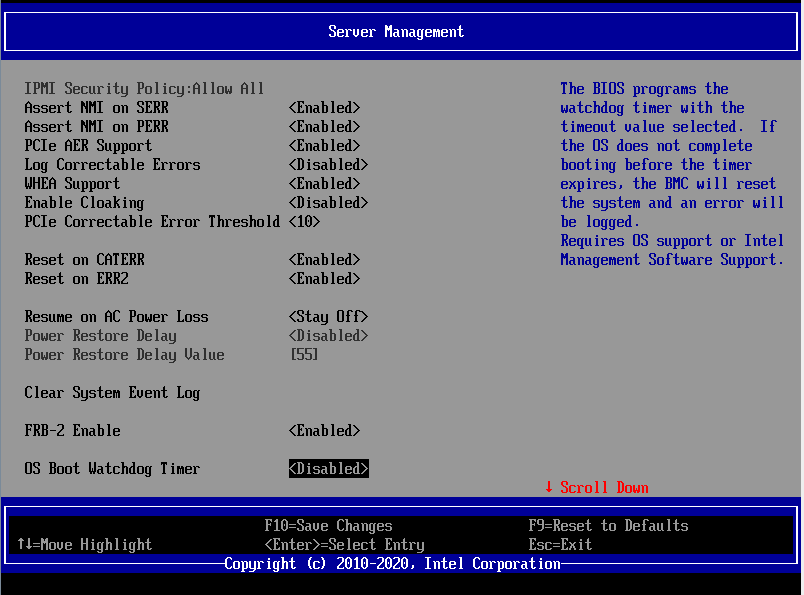

BMC Watchdog

The BMC Watchdog is a function of the BMC to help ensure that servers boot into the operating system:

Note: Using the UEFI Shell does not count as a valid OS boot. In this situation, the system may reboot when BIOS updates are being performed. It is highly recommended that you turn off the watchdog functionality before attempting to use the UEFI Shell to perform BIOS updates.

How To Turn Off the BMC Watchdog

Note: Some Intel BIOS versions may turn on BMC Watchdog functionality whereas previously it was disabled. We always recommend you check the OS Boot Watchdog timer setting after upgrading a server system BIOS.

Applies to:

There are several different RAID levels available to servers (and even desktop PCs) with today's technology. These offer varying features to either enhance performance or reliability, or sometimes a combination of both. Use the guide below to choose the RAID level that suits your needs. All quoted usable capacities are approximate.

RAID 0 - Stripe

RAID 0, also known as striping, leads to improved performance as the workload is shared between two or more drives. However, it has no fault tolerance. If any of the member disks develops a problem, the RAID may fail and data corruption or loss is likely.

Example: Two 500GB drives in a RAID 0 gives a usable capacity of 1TB (1000GB).

RAID 1 - Mirror

The mirror has improved fault tolerance over RAID 0. Each drive is a duplicate copy. The system can benefit from improved read speeds with a controller which can read alternate blocks from each drive at the same time. Write performance is the same as a single drive, since write operations must be duplicated. With RAID1 the capacity of the RAID is halved.

Example: Two 500GB drives in a RAID 1 gives a usable capacity of 500GB.

RAID 5 - Stripe with Parity

RAID 5 used to be the common RAID standard for servers. Write and read performance is improved with each additional disk or spindle that you add to the RAID. You lose the capacity of one drive, since one drive contains parity information. (Technically, the parity information is distributed across all of the drives, however you do lose one drive's worth of overall capacity.) You need a minimum of 3 hard drives for a RAID 5. The disadvantage of RAID5 is that if you suffer an outright hard drive failure, the remaining drives need to be in perfect working order with no bad blocks. RAID6 gets around this problem. RAID 5 is computationally expensive as parity must be calculated. Therefore it is slower than RAID 0.

Example: Three 500GB drives in a RAID 5 gives a usable capacity of 1TB (1000GB).

RAID 6 - Stripe with double parity

RAID 6 takes RAID 5 one level further with two lots of distributed parity. You need a minimum of four hard drives and will lose the capacity of two. RAID6 has even greater computational requirements than RAID5, and for this we recommend a dedicated hardware RAID controller that has the offload capabilities.

RAID 6 can suffer two hard drive failures and preserve the data. Typically, if you have one outright hard drive failure, you can still rebuild the RAID array even if bad blocks are encountered on other drives during the rebuild, as long as the bad blocks do not appear in the same location on multiple drives. RAID 6 has improved reliability over RAID5 and is recommended for all general application and file storage requirements, including VMware datastores.

Example: Four 500GB drives in a RAID 6 gives a usable capacity of 1TB (1000GB).

Example: Five 500GB drives in a RAID 6 gives a usable capacity of 1.5TB (1500GB).

When a drive fails in a RAID6 array the entire RAID6 pack needs to be rebuilt. This involves reading the contents of all remaining working disks to write the contents of the newly fitted replacement disk. This can lead to degradation in overall system performance for an extended period of time whilst the rebuild is carried out. In this situation, RAID 10 can give improved rebuild times and greater overall performance, but with the negative impact of increased cost or reduced capacity.

RAID 10, RAID 50 and RAID 60

These RAID levels take smaller stripes (RAID 0) and then RAID these together either with RAID 0, RAID 5 or RAID 6. Because of the increased (doubled) hard drive count, performance can be impressive. However, high cost is a negative factor with these RAID levels. RAID50 can only withstand a fault in one stripe at a time so if you need an ultimate performance storage system we recommend RAID6.

Example: Six 500GB drives in a RAID 50 gives a usable capacity of 2TB (2000GB) (RAID 5 of three 1TB stripes).

Example: Eight 500GB drives in a RAID 60 gives a usable capacity of 2TB (2000GB) (RAID 6 of four 1TB stripes).

JBOD

Just a Bunch of Drives refers to a collection of drives which are not configured in a RAID, so the individual drives are accessible as separate volumes. It can also refer to storage array units which have no inbuilt RAID functionality, but are designed to connect to an upstream RAID controller. For example, you may have a SAN array which will have an inbuilt RAID controller. Capacity can be expanded on some models by adding a JBOD array. The JBOD has no RAID functionality but the drives will be seen, managed and incorporated into a RAID configuration by the main controller in the upstream SAN array.

RAID 5 vs. RAID6

As above, RAID6 gives an additional level of protection against drive failures, due to the extra copy of parity information. However, this can come at the cost of performance as RAID6 places a greater load on the RAID controller. Using a powerful hardware RAID controller (such as the second generation SAS 6G controllers) can provide good RAID6 performance and in this situation RAID6 is strongly recommended. Some customers have historically used RAID5 plus a hot-spare disk and this can be turned into a reliable RAID6 setup without loss of capacity or increase in cost.

Recommendation: Contact Stone support for more information on deciding which RAID level is right for you. Some specific applications may request RAID0 for application log files; if this is the case this should be a separate volume to your operating system, which should never use RAID 0.
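The usable capacity examples for RAID 0, 1, 5 and 6 above follow a simple rule: total drive count minus the drives' worth of capacity given up to mirroring or parity. The Python sketch below is an illustration only. The function name is hypothetical, RAID 1 is assumed to be a two-drive mirror, and RAID 10, 50 and 60 are left out because their capacity depends on how the drives are grouped.

```python
# Minimum drive counts per RAID level, as described in the text above.
MINIMUM_DRIVES = {0: 2, 1: 2, 5: 3, 6: 4}


def usable_capacity_gb(level, drive_count, drive_size_gb):
    """Approximate usable capacity in GB for identical drives in one array."""
    if level not in MINIMUM_DRIVES:
        raise ValueError(f"RAID {level} is not covered by this sketch")
    if drive_count < MINIMUM_DRIVES[level]:
        raise ValueError(f"RAID {level} needs at least {MINIMUM_DRIVES[level]} drives")
    if level == 1 and drive_count != 2:
        raise ValueError("this sketch treats RAID 1 as a two-drive mirror")

    # Drives' worth of capacity given up to redundancy at each level.
    redundancy_drives = {0: 0, 1: 1, 5: 1, 6: 2}[level]
    return (drive_count - redundancy_drives) * drive_size_gb


if __name__ == "__main__":
    for level, count in [(0, 2), (1, 2), (5, 3), (6, 4), (6, 5)]:
        print(f"RAID {level}, {count} x 500GB:", usable_capacity_gb(level, count, 500), "GB")
```

Run as a script, this reproduces the figures quoted above: 1000GB, 500GB, 1000GB, 1000GB and 1500GB. As with the article's own figures, the results are approximate and ignore controller metadata and formatting overhead.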

Applies to:
